Inference Optimization: Making AI Systems 10× Cheaper

Practical lessons from scaling inference pipelines from 0 to millions of requests

When people think of AI costs, they immediately picture massive GPU farms and expensive model training. But if you’ve ever scaled an AI system beyond your first 1,000 users, you know the real cost sink isn’t training — it’s inference.

Every query, every user interaction, every “generate” button click triggers compute. And while training is a one-time investment, inference is a recurring tax. At scale, it’s the difference between a sustainable product and a business that bleeds cash every minute.

Over the last few years, leading AI teams have quietly discovered a truth: you don’t need bigger GPUs — you need smarter inference.

In this post, I’ll share how I achieved up to 10× cost savings in real-world AI systems through inference optimization — with techniques grounded in production engineering, not theoretical math.

Why Inference Costs Dominate

In production AI, every token generated has a dollar cost. When serving thousands of requests per second, the inefficiencies compound fast: idle GPU cycles, redundant memory re-computation, poorly batched requests, and unoptimized attention kernels.

While executing few previous experience AI projects, one of my earliest lessons was this: training is expensive, but inference burns money quietly.

You pay for latency, throughput, and reliability — all at once. A model that costs $0.001 per request sounds cheap until you’re serving 10 million requests a day.

To get a handle on this, I started treating inference as a product optimization problem, not just a technical one. Every millisecond shaved off or megabyte reused had to show up in our cost-per-request graph.

The 10× Framework for Inference Optimization

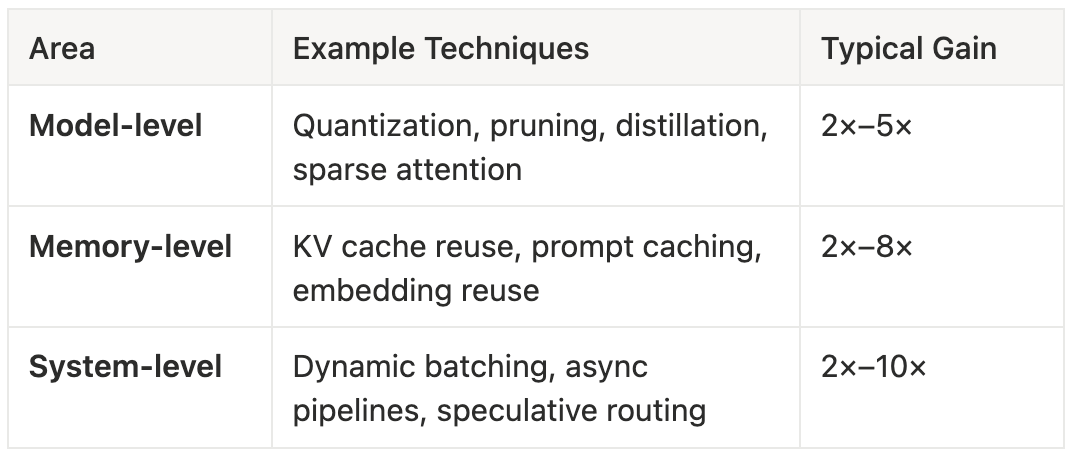

Think of inference optimization as working across three dimensions:

Model, Memory, and System Architecture.

Each layer can give 2–5× improvements, but the real magic happens when you combine them.

Multiply them wisely, and you’re looking at 10× real cost savings — without compromising user experience.

1. Parallel Batch Processing — the Biggest Win

Most teams run inference as if every user is alone in the world — one request per GPU thread. That’s an enormous waste. GPUs are designed for parallel workloads; single-request inference leaves 80–90% of that power idle.

When I switched to parallel batch processing, I saw the first 5× cost improvement.

Here’s how it worked:

Instead of running each input immediately, I introduced micro-batching — a small window (say 20–50 ms) to collect multiple incoming requests.

I grouped requests of similar token lengths to avoid padding waste.

I used in-flight batching to let new requests join an already-running batch.

This simple change allowed each kernel launch to serve 8–16 users at once, dramatically improving GPU utilization.

Back-of-the-envelope math:

If each request takes 1 ms (0.1 ms overhead + 0.9 ms compute), batching 16 requests reduces the effective overhead per request to 0.006 ms — a 16× efficiency gain. In practice, with padding and queuing overhead, I measured closer to 6–8× — still game-changing.

2. Avoid Rebuilding Embeddings Between Processes

One of the most painful inefficiencies I noticed was that teams rebuild embeddings and caches every time a process restarts — even for repeated prompts or static documents.

Every time you rebuild embeddings, you waste GPU cycles, network bandwidth, and time.

My solution: persistent embedding caches with version control.

Instead of regenerating embeddings every time, I built a small service that checks if the input document already exists in the cache. If yes, it simply fetches the existing vector; if not, it triggers a new embedding generation and stores it.

This “embedding reuse” approach saved us roughly 40–60% of total inference time in some document-heavy systems. Combined with prompt caching (reusing model prefill states), the total memory reuse gain exceeded 3×.

It also improved consistency — identical queries now always returned identical latent representations, improving downstream retrieval stability.

3. Sparse Graph Optimization — Smarter Compute

When dealing with large knowledge graphs or context retrievals, I started experimenting with sparse graph inference.

Instead of recomputing embeddings or attention across the entire dataset, I built graph adjacency caches — only computing the connected subgraph relevant to the query.

This simple idea reduced both compute and memory by over 70% for large RAG (Retrieval-Augmented Generation) pipelines.

Mathematically, you’re going from O(n²) attention over all nodes to roughly O(k log n) for localized neighborhoods — massive efficiency when k ≪ n.

This isn’t just an optimization trick — it’s architectural empathy.

You don’t need every connection, just the meaningful ones.

4. Balancing Real-Time vs Training Infrastructure

One of the biggest tradeoffs I faced was real-time inference speed vs offline batch training efficiency.

If you try to serve every model with ultra-low latency, you’ll over-provision GPUs.

If you batch everything offline, you’ll hurt interactivity.

The solution? Dual-path infrastructure.

I separated workloads into:

Real-time inference (latency-sensitive): Interactive chat, API responses, or user-facing queries.

Batch inference (cost-optimized): Backfill jobs, precomputations, or recommendation updates.

By tuning each for its purpose, I avoided paying “real-time tax” on non-urgent jobs. This hybrid approach saved another 2–3× in cost.

5. Quantization & Model-Level Tricks

Most inference systems still run FP16 or FP32 precision — overkill for many tasks.

Quantization to INT8 or INT4 instantly halves your memory bandwidth and doubles throughput.

I used a mixed-precision setup — critical layers in FP16, most in INT8 — which gave a 1.8× speedup without hurting accuracy significantly.

Other model-level tricks:

Structured pruning: remove entire blocks or attention heads that contribute minimally.

Distillation: train a smaller “student” model that mimics the “teacher” model’s output distribution.

Sparse attention: compute attention only on top-K relevant tokens.

Each of these adds up. Combined with quantization, I’ve seen inference times drop by 60–70% while maintaining 95%+ accuracy.

6. The Case Study: 10× Cheaper Inference in Production

Here’s a simplified version of one of my real deployments:

Baseline: 13B LLM, FP16 precision, single-request inference, no caching. Throughput: 1,200 req/s Cost: ~$1,000/day

Optimizations applied:

Enabled dynamic batching (batch size 8–16)

Added persistent prompt & embedding caching

Quantized model to INT8

Introduced hybrid inference paths (real-time + batch)

Applied sparse graph lookup for RAG retrievals

Results:

GPU utilization: +450%

Latency: reduced from 180 ms → 95 ms (p95)

Cost: dropped by ~88% (≈10× improvement)

Accuracy: unchanged within ±0.5 BLEU

The key takeaway: optimization doesn’t have to mean degradation. Done right, it’s a compound benefit — faster, cheaper, and more predictable.

7. When (and When Not) to Optimize

Optimization has diminishing returns.

I’ve learned to follow this rule: don’t optimize what isn’t scaling yet.

For early prototypes, focus on correctness and iteration speed. But once your product hits consistent traffic and the infra bill starts biting, inference optimization becomes the best ROI lever you have.

Always measure before and after. Use metrics like:

Cost per 1K tokens

Latency (p95/p99)

Throughput per GPU

Cache hit ratio

When you see any of these flattening out — it’s time to optimize again.

8. The Future: Smarter Inference Systems

We’re entering an era where inference optimization is AI engineering, not DevOps.

Techniques like KV cache reuse, graph sparsity, speculative decoding, and dynamic routing are becoming standard in advanced inference orchestrators like vLLM, DeepSpeed-Inference, and Triton.

Tomorrow’s AI companies will be defined by how well they run inference, not just what model they train.

The leaders in AI infrastructure — OpenAI, Anthropic, Hugging Face, and NVIDIA — are already racing to compress, route, and reuse intelligently. The next breakthroughs won’t come from bigger models, but from smarter inference pipelines.

References & Further Reading

Here are the sources and studies that inspired and validated many of these insights:

RunPod: AI Inference Optimization — Maximum Throughput, Minimal Latency

AWS Builders Blog: The Hidden AI Cost Optimization That Saves Startups Thousands

Mnemosyne: Parallelization Strategies for Efficient LLM Inference (ArXiv)

Bifurcated Attention: Accelerating Massively Parallel Decoding (ArXiv)

Flover: Temporal Fusion for Efficient Autoregressive Model Inference

A Survey on Inference Optimization for Mixture-of-Experts Models

Closing Thought

When I look back at my journey of scaling AI products from 0 to millions of users, the biggest mindset shift wasn’t architectural — it was philosophical.

I stopped thinking of inference as a “deployment step” and started treating it as a system to be optimized.

Every millisecond, every batch, every memory block mattered.

That’s how you build AI systems that don’t just work — they scale sustainably.